Persona is not limited to enterprise dashboards or productivity software.

The same runtime model can also power embedded and simulated environments such as robots, devices, NPCs, and other world-facing experiences.

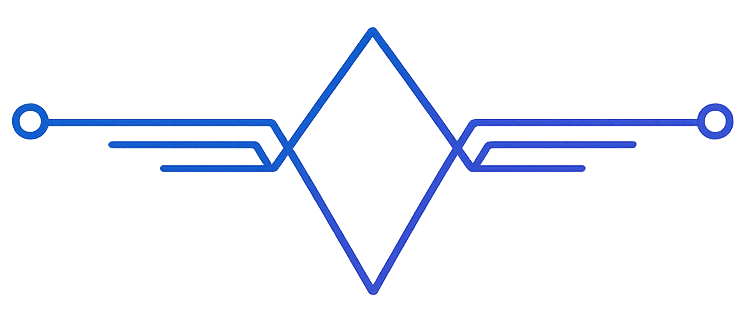

One brain, different bodies

The key idea is simple:

- the Persona brain remains the same

- the surrounding interface changes depending on the environment

That means one Persona can be expressed through:

- a virtual world NPC

- a robot or embodied device

- a simulation environment

- an in-product character

- a guided assistant inside a custom experience
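The split between brain and body can be sketched in a few lines. This is an illustrative sketch only, not the Persona API: `PersonaBrain`, `Body`, `NpcBody`, `RobotBody`, and `step` are all hypothetical names chosen for this example. It assumes the brain produces plain text and each body decides how to express it.

```python
from dataclasses import dataclass, field
from typing import List, Protocol


class Body(Protocol):
    """Hypothetical interface for an environment-specific surface."""

    def express(self, text: str) -> str: ...


@dataclass
class PersonaBrain:
    """Hypothetical stand-in for the shared runtime (not the real Persona API)."""

    name: str
    memory: List[str] = field(default_factory=list)

    def respond(self, observation: str) -> str:
        # The brain records what it saw, regardless of which body reported it.
        self.memory.append(observation)
        return f"{self.name} has noted {len(self.memory)} event(s); latest: {observation}"


class NpcBody:
    """Expresses the brain's output as in-world dialogue."""

    def express(self, text: str) -> str:
        return f"[NPC dialogue] {text}"


class RobotBody:
    """Expresses the brain's output as spoken audio on a device."""

    def express(self, text: str) -> str:
        return f"[robot speech] {text}"


def step(brain: PersonaBrain, body: Body, observation: str) -> str:
    # Same brain, whichever body is attached for this interaction.
    return body.express(brain.respond(observation))


brain = PersonaBrain(name="Mira")
first = step(brain, NpcBody(), "player entered the tavern")
second = step(brain, RobotBody(), "user asked for a reminder")
```

Both calls go through the same `brain` object, so the second response already reflects what happened in the first, even though the body changed in between.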

Why this matters

Many AI integrations become brittle once they move outside a chat window.

Persona is designed for the opposite case: the intelligence stays coherent while the body or surface changes.

That makes it easier to build experiences where the user expects continuity, such as:

- a tutor that remembers progress

- an elder-care companion that maintains routines

- an NPC that feels like the same entity over time

- an embodied agent that can revisit plans and follow up later

Practical design rule

Keep the Persona consistent, and let the environment define the available surfaces.

In practice that means:

- memory belongs to Persona, not to one screen

- planning belongs to Persona, not to one session

- actions depend on the environment, but intent remains tied to the same runtime
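One way to picture this rule is a Persona object that owns memory and a plan, while each environment only maps intents to the actions it supports. The sketch below is hypothetical throughout: `Persona`, `Environment`, `run_session`, and the intent and action names are invented for illustration and are not taken from the Persona runtime.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Persona:
    """Hypothetical container: memory and plan live here, not in a screen or session."""

    memory: List[str] = field(default_factory=list)
    plan: List[str] = field(default_factory=list)  # pending intents, in order


@dataclass
class Environment:
    """Hypothetical surface: maps abstract intents to the actions it supports."""

    name: str
    actions: Dict[str, str]

    def perform(self, intent: str) -> Optional[str]:
        # Returns None when this environment has no action for the intent.
        return self.actions.get(intent)


def run_session(persona: Persona, env: Environment) -> List[str]:
    """Carry out whatever the environment allows; keep the rest of the plan for later."""
    done: List[str] = []
    remaining: List[str] = []
    for intent in persona.plan:
        action = env.perform(intent)
        if action is None:
            remaining.append(intent)  # unsupported here, so the intent stays planned
        else:
            done.append(action)
            persona.memory.append(f"{env.name}: {intent}")
    persona.plan = remaining
    return done


persona = Persona(plan=["greet", "remind_medication"])
tablet = Environment("tablet", {"greet": "show_banner"})
robot = Environment(
    "robot", {"greet": "wave_arm", "remind_medication": "speak_reminder"}
)

tablet_actions = run_session(persona, tablet)
robot_actions = run_session(persona, robot)
```

The tablet can only handle the greeting, so the reminder stays in the plan and is completed when the same Persona later runs on the robot. The memory records both steps in one place.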

Where to start

The cleanest first version usually commits to:

- one environment

- one Persona

- one recurring use case

Examples:

- a single NPC role inside one world

- one robot assistant for one bounded routine

- one embedded tutor for one learning flow

That gives you enough shape to make the experience feel alive without overextending the first deployment.